
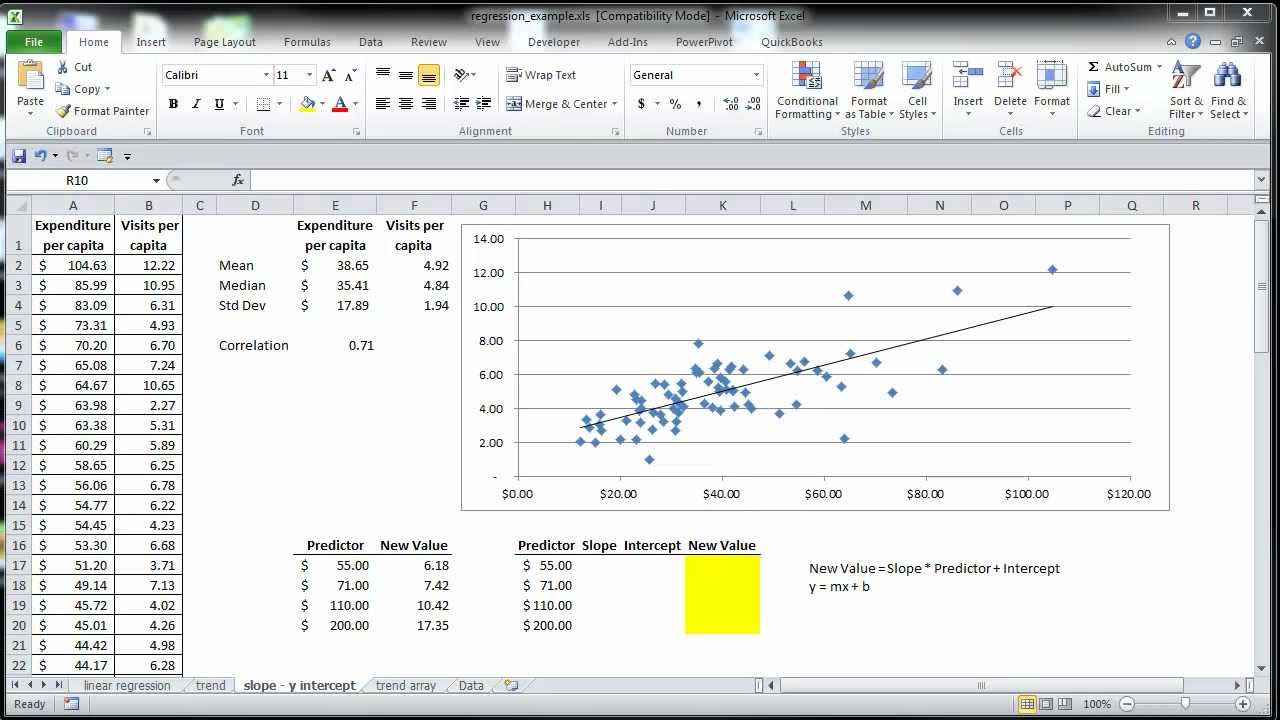

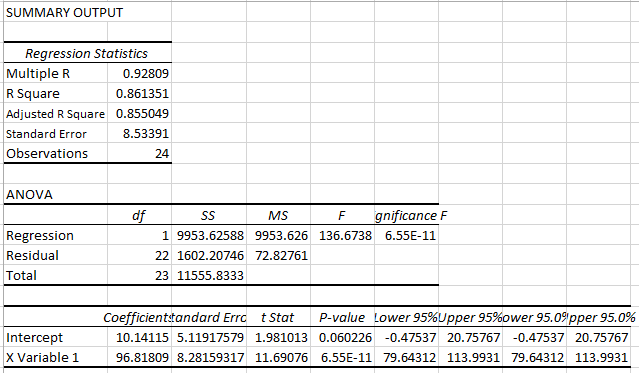

X values represents the range that contains the variable or variables that are used as predictors.

const is either TRUE or FALSE, and indicates whether LINEST() should include a constant (also called an intercept) in the equation or omit it. If const is TRUE or omitted, the constant is calculated and included. If const is FALSE, the constant is omitted from the equation.

stats, if TRUE, tells LINEST() to include statistics that are helpful in evaluating the quality of the regression equation as a means of gauging the strength of the relationship between the Y values and the X values.

Setting the const argument to FALSE can easily have major implications for the nature of the results that LINEST() returns. And there is a real question of whether the const argument is a useful option at all. In fact, the question is not limited to LINEST() and Excel: it extends to the whole area of regression analysis, regardless of the platform used to carry out the regression.

Some credible practitioners believe that it's important to force the constant to zero in certain situations, usually in the context of regression discontinuity designs. Others, including myself, believe that if setting the constant to zero appears to be a useful and informative option, then linear regression itself is often the wrong model for the data.

Figure 2. The deviations are centered on the means.

In Figure 2, cells G15:H15 contain the sums of squares for the regression and the residual, respectively. They are based on the predicted Y values, in L21:L40, and the deviations of the predicted values from the actuals, in M21:M40. The sums of squares are calculated by means of the DEVSQ() function, which subtracts the mean of the values in the argument's range from each value, squares the results, and sums the squares.

The value in cell G13, 0.595, is the R² for the regression. One useful way to calculate that figure (and a useful way to think of it) is `=G15/(G15+H15)`. That is, R² is the ratio of the sum of squares regression to the total sum of squares of the Y values. The result, 0.595, states that 59.5% of the variability in the Y values is attributable to variability in the composite of the X values.

Notice in Figure 2 that the statistics reported in G11:J15 are identical to those reported in G3:J7 (except that LINEST() reports the regression coefficients and their standard errors in the reverse of worksheet order). The former are calculated using Excel's matrix functions; the latter are calculated using the LINEST() function. Also notice that the correlation between the actual and the predicted Y values is given in cell H22. The square of that correlation, in cell H23, is 0.595. That is, of course, R², the same value that you get by calculating the ratio of the sum of squares regression to the total sum of squares.

There's nothing magical about any of this. It's all as expected according to the mathematics underlying regression analysis.

Now examine the same sort of analysis, shown in Figure 3.

Figure 3. The deviations are centered on zero.

Notice the values for the sum of squares regression and the sum of squares residual in Figure 3. They are both much larger than the sums of squares reported in Figure 2. The reason is that the deviations that are squared and summed in Figure 3 are the differences between the values and zero, not between the values and their mean. This change in the nature of the deviations always increases the total sum of squares, unless the mean of the Y values happens to be exactly zero.
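The sums of squares described above can be reproduced outside Excel. What follows is a minimal Python sketch, assuming NumPy is available. It fits a least-squares regression with and without a constant and computes the sums of squares in two ways:

- **With the constant:** DEVSQ()-style deviations from the mean.
- **With the constant forced to zero:** deviations from zero. This is intended to mirror how LINEST() reports its sums of squares when const is FALSE.

The helpers `devsq` and `sums_of_squares` are hypothetical names written for this illustration. The data are randomly generated and are not the data behind Figure 2 or Figure 3.

```python
import numpy as np


def devsq(values):
    """Sum of squared deviations from the mean, as Excel's DEVSQ() computes it."""
    values = np.asarray(values, dtype=float)
    return float(np.sum((values - values.mean()) ** 2))


def sums_of_squares(x, y, const=True):
    """Least-squares fit returning (ss_regression, ss_residual, r_squared, predicted).

    const=True: an intercept is fitted and deviations are taken from the mean.
    const=False: the intercept is forced to zero and deviations are taken
    from zero, intended to mirror LINEST() when its const argument is FALSE.
    """
    y = np.asarray(y, dtype=float)
    # Treat a 1-D x as a single predictor column; a 2-D x keeps its columns.
    x = np.asarray(x, dtype=float).reshape(len(y), -1)
    design = np.column_stack([x, np.ones(len(y))]) if const else x
    coefs, *_ = np.linalg.lstsq(design, y, rcond=None)
    predicted = design @ coefs
    ss_residual = float(np.sum((y - predicted) ** 2))
    if const:
        # Predicted values share the mean of y, so DEVSQ() applies directly.
        ss_regression = devsq(predicted)
    else:
        # No constant: square the predicted values themselves (deviations from zero).
        ss_regression = float(np.sum(predicted ** 2))
    r_squared = ss_regression / (ss_regression + ss_residual)
    return ss_regression, ss_residual, r_squared, predicted


if __name__ == "__main__":
    # Invented data for illustration only; not the data behind Figure 2 or 3.
    rng = np.random.default_rng(0)
    x = rng.uniform(10, 20, size=20)
    y = 5 + 0.8 * x + rng.normal(0, 2, size=20)

    for const in (True, False):
        ss_reg, ss_res, r2, predicted = sums_of_squares(x, y, const=const)
        r_actual_predicted = np.corrcoef(y, predicted)[0, 1]
        print(f"const={const}: SSreg={ss_reg:.1f}  SSres={ss_res:.1f}  "
              f"R2={r2:.3f}  r(actual, predicted)^2={r_actual_predicted ** 2:.3f}")
```

**With the constant included:** R² computed as `ss_regression / (ss_regression + ss_residual)` should equal the squared correlation between the actual and predicted values, the same relationship as cells H22:H23.

**With the constant forced to zero:** both sums of squares are measured from zero. Their total is then the sum of the squared Y values rather than DEVSQ() of them, and that match no longer holds.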
